At Litmus, we’re constantly testing emails. It’s kinda what we’re known for 😉. Most of the time, we’re talking about pre-send testing—previewing your email on hundreds of email clients and devices to see how it appears before your subscribers receive it.

But there’s another type of testing that’s almost as important: A/B testing.

This takes place after you send your email and is usually done through your email service provider (ESP).

If you’re tired of making email marketing decisions based on hunches (or trying to get buy-in for your plans), then running an A/B test can give you more insight into how your audience responds to different design, copy, and strategic choices. With a bit of foresight and planning, you can turn your gut feelings and ideas into real data to share with the entire team. In this post, we’ll cover:

Table of contents

- What is email A/B testing?

- Why A/B testing fuels better email decision-making

- How to dodge common A/B testing mistakes

- How to set up and run efficient A/B tests

- What elements to A/B test in your emails

- When you should NOT run an A/B test

- How popular brands run A/B tests

- Popular email marketing A/B testing tools

- Before you launch your first email A/B test

- Take the guesswork out of your testing strategy with Litmus

What is email A/B testing?

Email A/B testing, also known as split testing, is the process of creating two versions of the same email with one variable changed, like swapping out a subject line or applying behavioral targeting. Then, you send that email to two subsets of an audience to see which version performs best.

In other words: email A/B testing pits two emails against each other to see which is superior. You can test elements that are big or small to gain customer insights that help you do things like:

- Update your email design.

- Learn about your audience’s preferences.

- Improve email performance.

You don’t always have to A/B test your emails, says Camila Espinal, Email Marketing Manager at Validity. “A/B testing is a great choice when engagement is your primary goal,” she says. “You’ll want to choose campaigns with large audiences, like your newsletter or a promotional send, and it should be a repeatable campaign where those insights can be applied again.”

A/B testing fuels better decisions for email marketers

It’s an extra step to add A/B testing to your email production process, but it’s a worthwhile one if you’re trying to maximize revenue from your email marketing program. Says Espinal, “It gives you the data you need so you can step away from taking a shot in the dark and use real information to sharpen your campaigns, improve engagement, and connect with your audience. You can definitively shift away from what doesn’t work so you’re no longer wasting your time creating campaigns that your customers or prospects aren’t interested in, and instead double down on the things that resonate with them.”

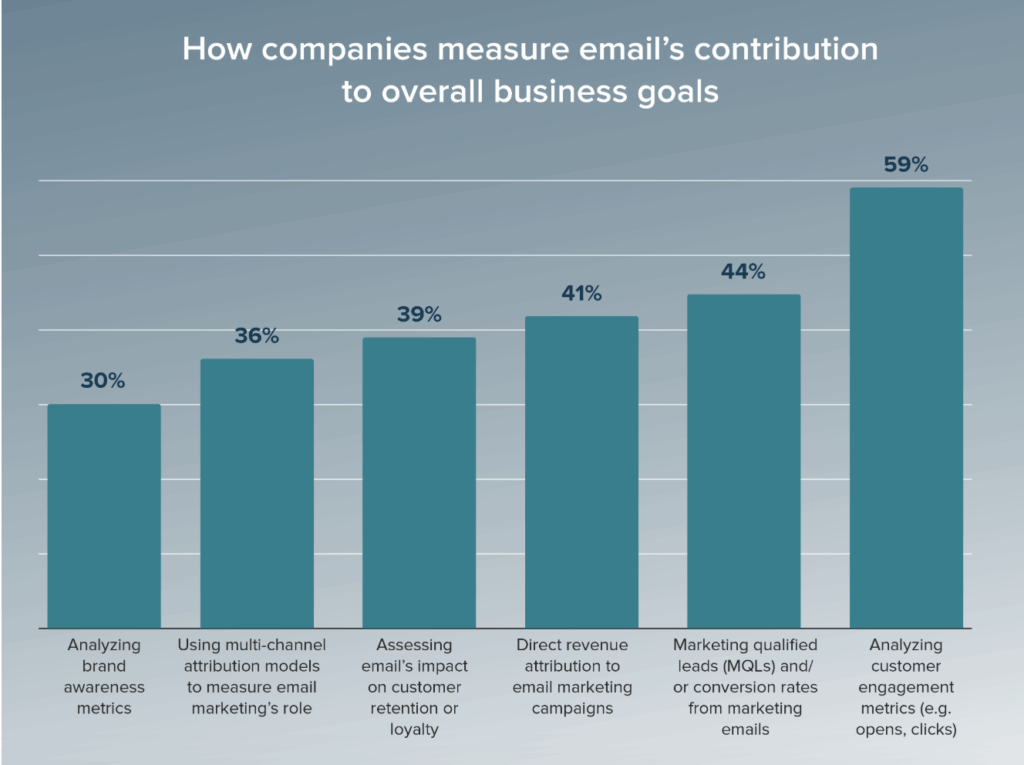

It adds a scientific element to your email marketing process so you can prove your email ROI instead of hoping for the best. When we asked email marketers how they improved the already-great 36:1 ROI from email marketing, 12% cited A/B testing as a key part of their strategy.

“Sometimes, you can get really stuck on ‘Oh, it’s what we’ve always done,’” adds Espinal. “One of the best parts of email marketing is having the opportunity to try something new, and use data to measure that impact.”

A/B testing starts with coming up with a clear hypothesis: What do you think will happen if you change element X? From there, you can test it out and take that information to move forward with a new design or strategy that can dramatically impact your bottom line.

Espinal adds, “You don’t know what works until you try.”

How to dodge 4 common email marketing A/B testing mistakes

Okay, email geeks. It’s time to put your scientist hat on. For A/B testing, you need to think like a scientist—which means choosing what you test, when you test, and how you test very carefully. Making one of these common mistakes can blow the entire test, making you think you’ve nailed down a great email marketing strategy only to get stuck with the same—or worse—results in your next send.

Avoid common mistakes like:

1. Testing too much at one time

If you try to test more than one element of your email…how will you know which element directly impacted the outcome?

The answer is you won’t. So even though you might be tempted to swap out a subject line AND a CTA AND your design in the same test to pit two emails against each other, resist the urge! Careful, incremental testing will get you where you need to go. “If you want to test multiple elements, you can use multivariate testing, but it requires a very large audience to make it work,” says Espinal.

This kind of test-everything mentality often comes from a deeper issue: Not having a clear hypothesis in the first place. You must have a defined goal in mind, with the matching metrics to measure, to determine the winner. “Even if one version performs better, you won’t learn why without a strong hypothesis,” adds Espinal. “It should be an if/then statement, like ‘If we change this subject line, then we’ll see more opens because it creates a sense of urgency.’”

2. Running tests with too small a sample

When you do run a test, it needs a large, randomized audience so the results can reach statistical significance. That means the audience has to be big enough and random enough that you can be confident your change had an impact and you’re not nitpicking between decimal points.

“With only a few hundred contacts, the results won’t reach statistical significance, so the outcome is unreliable,” says Espinal. “You should aim for 10,000 people or more on a test if you can. Similarly, you can’t assume one version won just because it’s slightly ahead, like 22% opens for version A vs. 21% opens in version B. That’s not a clear winner.”
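To see why a one-point gap isn’t a clear winner, here’s a minimal sketch of a pooled two-proportion z-test in Python. The send size (10,000 contacts split evenly) and the function name are assumptions for illustration, not something your ESP exposes.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(opens_a: int, sent_a: int, opens_b: int, sent_b: int) -> float:
    """Two-sided p-value for the difference between two open rates (pooled z-test)."""
    rate_a = opens_a / sent_a
    rate_b = opens_b / sent_b
    # Pool both groups to estimate the open rate under "no real difference".
    pooled = (opens_a + opens_b) / (sent_a + sent_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    z = (rate_a - rate_b) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical send: 5,000 contacts per version, 22% vs. 21% opens.
p_value = two_proportion_p_value(opens_a=1100, sent_a=5000, opens_b=1050, sent_b=5000)
is_clear_winner = p_value < 0.05  # 95% confidence threshold
print(f"p-value: {p_value:.3f}, clear winner: {is_clear_winner}")
```

With these hypothetical numbers the p-value lands well above 0.05, so the one-point lead could easily be noise.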

An A/B testing calculator can help determine the required sample size, and if the audience is too small, test only big, sweeping changes or use sequential tests to get a better sense of what’s working and what’s not. Aim for 95% confidence (p < 0.05) before declaring a winner, and if you’re not able to do that, run the test again.
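If you’re curious what a calculator like that does under the hood, here’s a rough sketch using the standard sample-size formula for comparing two proportions. The 80% statistical power default is a common convention and an assumption here, as are the function name and the example rates.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float, expected_rate: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate contacts needed in EACH version to detect the given lift."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # 95% confidence, two-sided
    z_power = NormalDist().inv_cdf(power)          # assumed 80% power
    mean_rate = (baseline_rate + expected_rate) / 2
    numerator = (
        z_alpha * sqrt(2 * mean_rate * (1 - mean_rate))
        + z_power * sqrt(baseline_rate * (1 - baseline_rate)
                         + expected_rate * (1 - expected_rate))
    ) ** 2
    return ceil(numerator / (expected_rate - baseline_rate) ** 2)


# Hypothetical open rates: a subtle change vs. a big, sweeping change.
small_lift = sample_size_per_variant(0.21, 0.22)
big_lift = sample_size_per_variant(0.21, 0.25)
print(f"Detect 21% -> 22%: {small_lift:,} contacts per version")
print(f"Detect 21% -> 25%: {big_lift:,} contacts per version")
```

The subtle change needs a far larger audience than the sweeping one, which is why small lists are better off testing bold differences.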

Then, use your ESP to make sure it’s a random split, rather than testing existing segments (like a West Coast vs. East Coast audience split) to remove any potential bias from the differences in your audience. Otherwise, you won’t be able to isolate the variable you’re using against built-in preferences from your audience.
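Your ESP normally handles the randomization for you. If you ever split a list by hand, one possible approach is to hash each address together with a test name, which gives an assignment that looks random but stays stable if you rerun it. This is a sketch; `assign_variant` and the test name are hypothetical.

```python
import hashlib


def assign_variant(email: str, test_name: str) -> str:
    """Deterministically assign a contact to version A or B for one test."""
    # Including the test name means the same person can land in
    # different groups across different tests.
    key = f"{test_name}:{email.strip().lower()}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    return "A" if digest[0] % 2 == 0 else "B"


print(assign_variant("pat@example.com", "subject-line-urgency"))
```

Because the assignment ignores attributes like location or purchase history, it avoids the built-in bias you’d get from splitting along existing segments.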

3. Funky test timing

You may be anxious to see the results, but an A/B test takes at least 48 hours to run its course, and even then, you may want to wait longer to achieve statistical significance. “It takes a while for people to get through their inbox,” says Espinal. “Make sure you’re allowing enough time for people to see the email before calling a winner.”

Early results can flip-flop wildly, so if you don’t define the test duration up front with your team, you risk calling a false positive on a variant. Once the test has run its full course, it’s up to you to decide if you want to run a confirmation test with a future email or move forward with the results.

Similarly, make sure you’re testing outside of major promotions, holidays, or news events to avoid unusual behavior that can skew the results. “Unless you’re testing seasonal messaging, you should always try to run an A/B test under the conditions you want to replicate the results in,” says Espinal. “Unusual behavior won’t reflect normal campaigns, so you can’t use the results of that test.”

4. Forgetting about rolling out the results

You’ve put in all that effort to create the test—now make sure you’re documenting and actually using the results. “Log the hypothesis, outcome, and insight for every test,” says Espinal. “Otherwise, you’ll just repeat your mistakes. I keep a central A/B test log with test details and outcomes so I know what to do for my next campaign.”
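A central test log doesn’t need to be fancy. Here’s a minimal sketch of one as a CSV, with a column for each thing worth recording. The field names and the sample entry are hypothetical; a shared spreadsheet with the same columns works just as well.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ABTestRecord:
    campaign: str
    hypothesis: str
    variable: str
    metric: str
    outcome: str
    insight: str


def append_to_log(log_file, record: ABTestRecord) -> None:
    """Append one test to the log, writing the header row if the log is empty."""
    writer = csv.DictWriter(log_file, fieldnames=[f.name for f in fields(ABTestRecord)])
    if log_file.tell() == 0:
        writer.writeheader()
    writer.writerow(asdict(record))


# In-memory log for the example; a real log would be a file opened in append mode.
log = io.StringIO()
append_to_log(log, ABTestRecord(
    campaign="Monthly newsletter",
    hypothesis="If the subject line adds urgency then opens will rise",
    variable="Subject line",
    metric="Open rate",
    outcome="Made-up example result",
    insight="Made-up example insight",
))
print(log.getvalue())
```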

Turn the insights that you get from your hard work into playbooks and guidelines as you move forward with future campaigns. That way, instead of following the same “best practices” that everyone uses, you know exactly what works for your audience—whether it’s shorter subject lines or chatty ones, sending on Tuesdays or Wednesdays, or making your CTA buttons black instead of orange.

| A/B Testing Mistake | Impact | How to Avoid It |
|---|---|---|
| Testing too much at once | It’s impossible to isolate what actually worked. | Test only one element of your emails at a time. |
| Using a sample size that’s too small | Without randomizing a large sample size, you won’t achieve statistical significance so you can’t choose a winner. | Test with random splits of 10,000 people or more. |
| Calling a winner too early or during “off” times | It takes time to get the results of a test and reach statistical significance so you can know which version truly won. | Wait 48–72 hours before calling the result. If there’s a major news event or holiday, wait to run the test. |
| Forgetting about the results | Failing to document and then roll out those changes defeats the purpose of running an A/B test in the first place. | Document all of your learnings and roll out changes in future email campaigns. |

How to set up and run effective email A/B tests

While email A/B testing is straightforward in theory, it can have a lot of moving parts. If you want to get accurate insights to share with your team, invest a little time planning and analyzing.

Below are the steps you need to take to run a successful (and insightful) A/B test.

1. Choose an objective

As with many projects, you need to start your email A/B testing with the end goal in mind. Choose your hypothesis, what you want to learn, or what metric you want to improve.

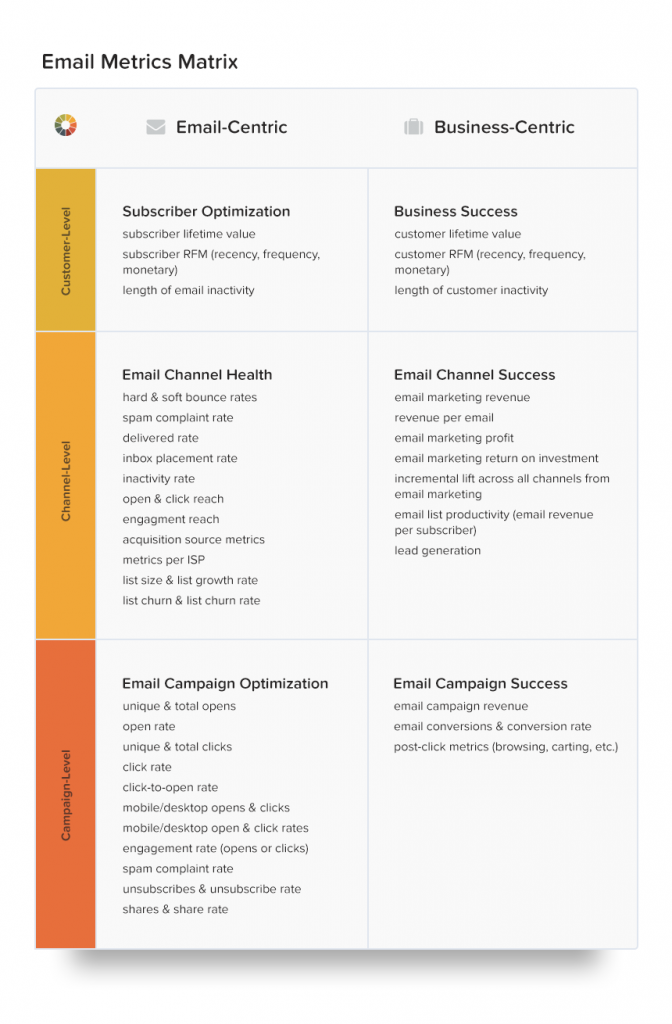

While you can use email A/B testing to improve campaign-level metrics like open rates, try to track the impact even further. For example, how does the conversion rate vary between the two emails? Since subject lines set expectations for the content, you might see their influence well beyond the inbox.

2. Pick the variable

Once you know what effect you want to have, it’s time to pick your variable component. Make sure you’re only testing one variable at a time. If there is more than one difference between your control and variable emails, you won’t know what change moved the needle. Isolating your email A/B tests may feel a bit slower, but you’ll be able to make informed conclusions.

3. Set up the parameters

The third step in your email A/B testing process has the most decisions. When you set up parameters, you decide on all of the pieces that ensure the test is organized. Your decisions include:

- How long you’ll run the test. You’ll probably be sitting on the edge of your seat waiting for results, but you need to wait at least 48 hours for results to trickle in.

- Who will receive the test. If you want to A/B test within a particular segment, make sure you have a large enough audience for results to be statistically significant.

- Your testing split. Once you know which segments will receive the test, you have to decide how to split the send. You could do a 50/50 split where half gets the control, and the other receives the variable version. Or, you can send control version A to 20% and test version B to another 20%, then wait and send the winner to the remaining 60%.

- Which metrics you’ll measure. Figure out exactly which metrics you want and how to get the data before your test. How will you define success?

- Other confounding variables. Make a note of variables like holidays that could impact the test results but are out of your control.
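The testing split above can be sketched in a few lines of Python. This is an illustration of the 20/20/60 idea, assuming you’re splitting an exported contact list by hand; the function name, seed, and sample addresses are hypothetical, and most ESPs do this for you.

```python
import random


def split_audience(contacts, test_fraction=0.2, seed=42):
    """Shuffle contacts, then carve out two equal test groups and a remainder."""
    shuffled = list(contacts)
    # A fixed seed keeps the split reproducible for your records.
    random.Random(seed).shuffle(shuffled)
    group_size = int(len(shuffled) * test_fraction)
    return {
        "A": shuffled[:group_size],                    # control version
        "B": shuffled[group_size:2 * group_size],      # test version
        "remainder": shuffled[2 * group_size:],        # gets the winner later
    }


contacts = [f"user{i}@example.com" for i in range(1000)]
groups = split_audience(contacts)
print({name: len(members) for name, members in groups.items()})
```

Setting `test_fraction=0.5` gives the plain 50/50 split with nothing left over.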

4. Run the test

Email A/B testing is all about options, and that includes how you run the test – whether for optimizing your lifecycle marketing efforts or improving engagement in a single email marketing campaign. The two main ways to run the test are:

- Set up the A/B test in your ESP to run automatically. Letting your ESP manage the split-sending could be a little easier to manage and is a good option for simple tests (like email QA) with more surface-level goals like increasing open rates.

- Manually split the send. Setting up the two separate emails and manually sending the emails is more hands-on but can give you a cleaner look at data beyond your ESP (like website engagement). Use this method if you want to track results beyond the campaign. If you’re in the middle of an ESP migration, this can help with seeing performance and feature gaps.

5. Analyze the results

Once it’s time to analyze the results, you’ll be grateful for every moment spent carefully planning. Since you went in with a clear idea, you know what to look for once the test is over. Now you need to compare results and share them with your team.

Unless you want to test again. Listen, you don’t have to, but there is a chance that your variable email got a boost from the “shiny new factor.” If you want to confirm the first test results, you can run a similar test (with the same cohort!) to see if the learnings remain true. Then, document those findings and make it official for future campaigns.
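When you compare results, it helps to look past the open rate. Here’s a small sketch that lines up several metrics for each version and computes the relative lift. All of the counts are made up for illustration, and the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    name: str
    delivered: int
    opens: int
    clicks: int
    conversions: int

    def rates(self) -> dict[str, float]:
        """Each metric as a share of delivered emails."""
        return {
            "open_rate": self.opens / self.delivered,
            "click_rate": self.clicks / self.delivered,
            "conversion_rate": self.conversions / self.delivered,
        }


def relative_lift(control: VariantResult, variant: VariantResult) -> dict[str, float]:
    """Relative change of the variant over the control for every metric."""
    control_rates = control.rates()
    variant_rates = variant.rates()
    return {
        metric: (variant_rates[metric] - control_rates[metric]) / control_rates[metric]
        for metric in control_rates
    }


# Made-up counts: version B wins on opens but not on conversions.
control = VariantResult("A", delivered=5000, opens=1000, clicks=150, conversions=30)
variant = VariantResult("B", delivered=5000, opens=1200, clicks=150, conversions=27)
lift = relative_lift(control, variant)
for metric, change in lift.items():
    print(f"{metric}: {change:+.1%}")
```

A version that wins on opens can still lose further down the funnel, so check each lift for statistical significance before acting on it.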

What to A/B test in your emails

You can test pretty much everything in your emails, from layout to colors to your copy. “You may like the look of a white, sleek email, but it may not resonate with your audience because it doesn’t stand out. It’s interesting to test color variations, image variations, whether it’s a rounded or square CTA button, your personalization choices…you never know what can make a difference until you test,” says Espinal. “These are the tiny changes you can make that contribute to a healthy email marketing ecosystem.”

If you create it, you can test it. To get you started, here are five common email components to A/B test:

1. The inbox envelope: from name, subject line, and preview text

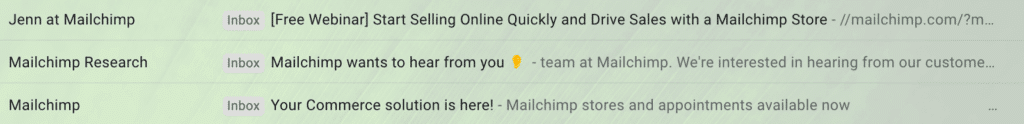

One of the elements that informs subscribers about an email (from the outside) is the from name. While you can experiment with this if you want, make sure it’s always clear that it’s from your company. Don’t try anything too off-the-wall that could feel spammy. For example, Mailchimp uses a few From names including “Mailchimp,” “Jenn at Mailchimp,” and “Mailchimp Research.”

If you want to increase open rates, the subject line is the most common place to start. You can experiment with different styles, lengths, tones, and positioning. For example, Emerson A/B tested two subject lines for a free trial email with a white paper:

- Control: Free Trial & Installation: Capture Energy Savings with Automated Steam Trap Monitoring

- Variable: [White Paper] The Impact of Failed Steam Traps on Process Plants

That particular test revealed a 23% higher open rate for the subject line referencing the white paper.

While the subject line arguably leads the charge in enticing a subscriber to open an email, it isn’t the only option you have. Testing your preheader or preview text can also boost open rates.

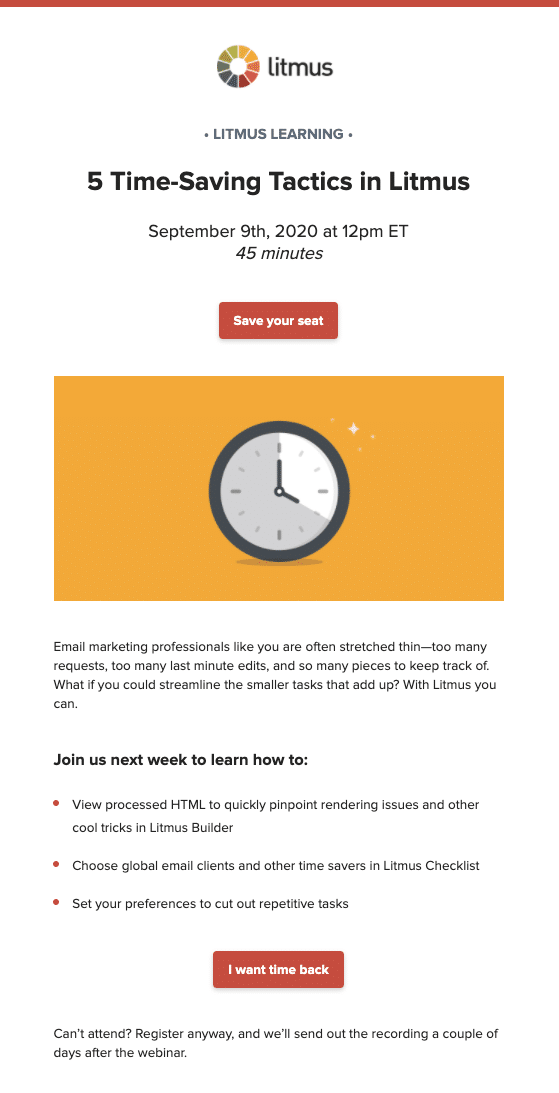

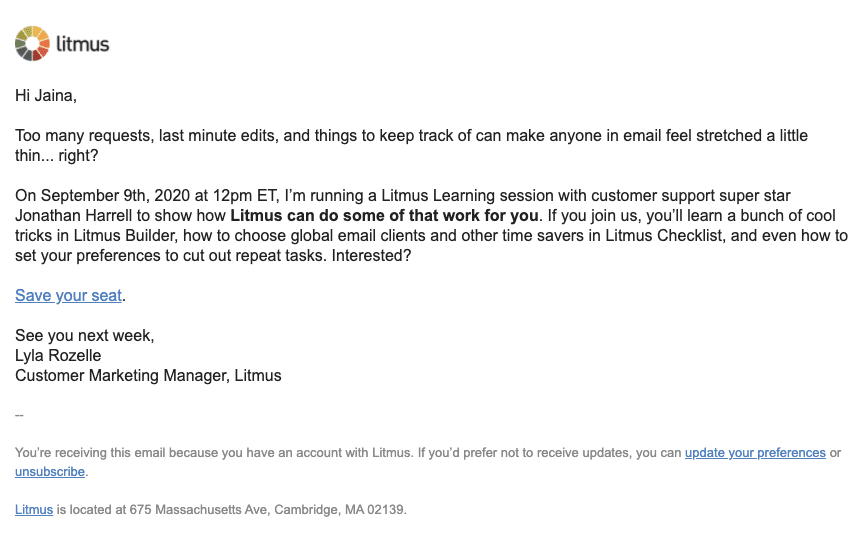

2. HTML vs. plain text

If you mostly send either HTML or plain text emails, it might be worth seeing if the grass is greener on the other side. Here at Litmus, we A/B tested these two email styles across a few segments. Through a few tests, we found that the best messaging varies between customers and non-customers and that plain text emails have a firm place in our email lineup.

HTML:

Plain text:

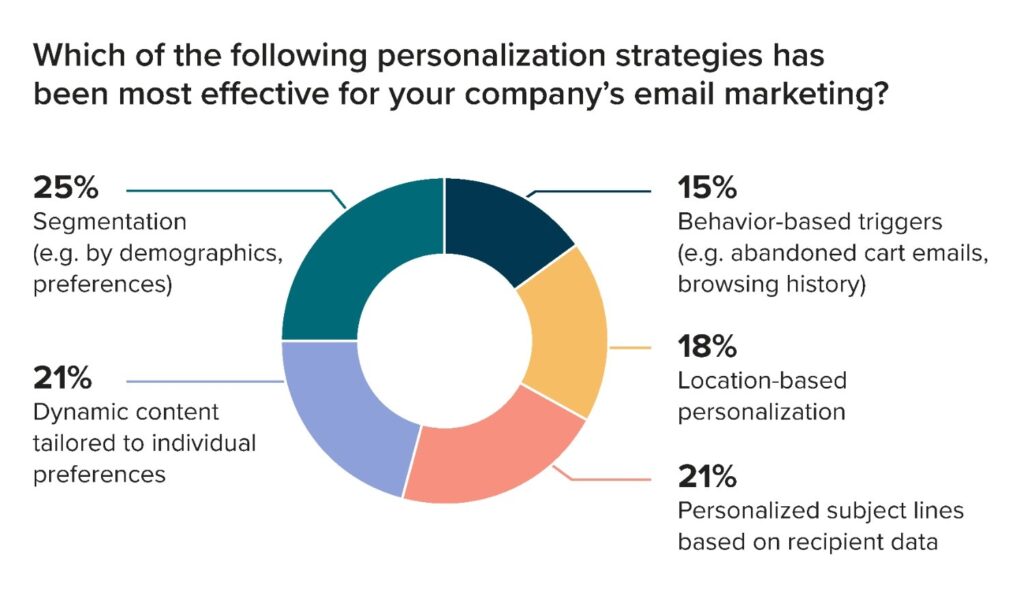

3. Personalization

“First name” is only one kind of personalization you can choose for your emails. While this method can increase clicks, you can expand your personalization horizons for your A/B tests, including dynamic content, interactive elements, or by adding (or removing) copy about a person’s location, previous purchases, customer status, and more.

When we asked email marketers for the 2025 State of Email Report what moved the needle the most with their personalization, dynamic content came in near the top, just behind segmentation strategy. If you’re not sold on dynamic content yet, test it.

Espinal likes to test personalization more than any other element because it has the biggest upside potential. “One of the most exciting tests I ran at my previous company was a specific nurture for a product by different audience buckets,” she says. “We changed the body copy of the email and ran A/B tests against a control for our three segments. It’s extra work but can make a product launch that much more successful.”

4. Automation and timing

Most email A/B testing focuses on what goes in an email, but you can also test when to send it.

For example, you could adjust how long after a person abandons their cart before you send them a reminder. Another A/B testing method to try is how many emails in a triggered sequence you send.

That’s true for testing broadcast emails, but automated and transactional emails deserve testing and improvement, too. Often, these emails are the ones doing the heavy lifting of subscriber engagement, so it’s crucial to test these always-on emails.

5. Copy, imagery, and design choices

The tone and positioning of your email copy impact whether the message catches a reader’s interest or not. A/B testing in the “copy” category covers a ton of elements in your email, including body copy, headlines, and button copy.

In addition to the design of an email, you can play around with the length of the message. Here are a few questions to ask yourself:

- Do subscribers want more content and context in the message, or just enough to pique their interest?

- What length is ideal for different types of email? Or different devices?

- Do all segments prefer the same length of email?

If you use stylized emails, try A/B testing your visuals. Do different hero images change engagement? Can you use animated GIFs in longer emails to increase read time? Does including an infographic in an email make people more likely to forward it? The possibilities are nearly endless.

| A/B Testing Element | Why Test It? |
|---|---|
| From name, subject line, preview text | Changing one of these elements can impact your open rate. And we all know that you don’t get any clicks without opens. |
| HTML vs. Plain text | Get a sense of the tone and style of your email designs that resonate the most with your subscribers. |
| Personalization | Play around with how much to personalize your emails and which elements to personalize, like first name, geolocation, customer status, and dynamic product recommendations. |
| Automation and timing | Not just what you send but when you send it can impact opens and clicks. This goes for your automated and transactional emails, too. |
| Copy, imagery, and design | There are endless ways to find optimization wins with your design and copy by changing out headlines, body copy, CTA buttons, and more. |

When NOT to run an email marketing A/B test

There are plenty of elements you can A/B test…but don’t confuse testing for taking action.

Espinal says, “Anytime you need to send a critical communication, or something very time-sensitive, that’s not the time to run a test. That includes transactional emails, like a confirmation email or shipping confirmation. Put your energy elsewhere.”

The most important thing to keep in mind? That whatever test you run can be repeated. So if you’re thinking of doing an A/B test on a random, ad hoc email campaign because you read this article and think you “should,” don’t worry! You don’t have to test every single campaign that you run. “It’s all about taking that data and replicating that moving forward, so if there’s any part of the campaign that you just can’t do that with, save the A/B test for another time,” adds Espinal.

How top brands use email A/B testing to drive results

What does A/B testing look like in action? Here’s how top brands use it in their regular email marketing cadence, as told to our email geeks at Litmus Live a few years back:

1. Square does incremental testing to isolate their experiments

Tyler Michael, from Square, uses A/B testing incrementally, changing one aspect of their nurture sequences and newsletters at a time so they can isolate exactly what worked or what didn’t. He recommends traditional elements to test, but he often focuses on timing dimensions you can A/B test like:

- Sending delay

- Timezone localization

- Day of the week

“If you’re ever surprised by the results, run the test again,” he advises. “There are several elements that can impact the validity of your results, like seasonality (holiday vs. summer), execution (load times or errors), or timing (day of week). The longer you run an experiment, the more likely your significance will stabilize.”

2. Intuit built a rapid email experimentation framework

Rian Lemmer and Kate Tinkleburg from Intuit recommend failing fast and savoring the surprises as much as possible for their email marketing program. “We’re talking about moving a couple of hours from idea to experiment in the ‘doing’ phase as fast as possible. Sometimes we even set aside our regular jobs to take a couple of days just doing experiments so we can iterate more quickly and build momentum,” says Lemmer.

“Most importantly, it needs to have real metrics behind it,” adds Tinkleburg. Surveys take too much time and introduce bias, which is why A/B testing allows them to get from A to Z faster. “You need to be able to see their behavior and make changes based on that. With email we have plenty of metrics you can use, but you need real customer currency so they have skin in the game or willingness to pay for the experiment to be worthwhile,” she says.

3. Indeed takes A/B testing further down the funnel

Lindsay Brothers, a product manager at Indeed, believes that experimentation is the best way to learn. “We’re often wrong,” says Brothers. “Job alerts, our most popular email list, hadn’t been tested for years. We tested the copy for the CTA of our form and absolutely no one thought the winner would win. More importantly, every single one did better than the control. We only stood to gain by testing this!”

You’d think the clearest CTA would be something like “subscribe” or “sign up,” but the winner was “activate.” By testing the form, they increased their email signups by 12%. That’s a huge win over time, and it marked the beginning of A/B testing other aspects of their email marketing program beyond what’s in the email itself.

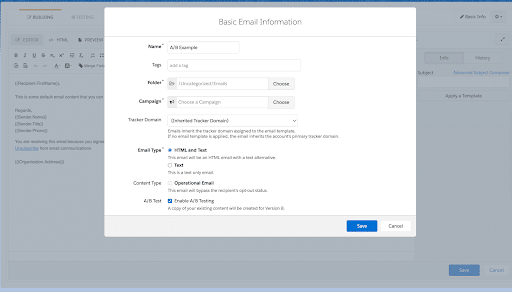

Popular email marketing A/B testing platforms

You can find A/B testing in pretty much any ESP these days, though the additional features will vary. For example, at Litmus we use Marketing Cloud Account Engagement (formerly Pardot). Here’s what our setup looks like when we run an A/B test:

When you’re comparing different A/B testing options within your ESP, consider:

- Does it allow you to randomize the audience? This is key for keeping audience bias out of your results.

- How easy it is to create two versions of the same email to send at the same time, especially if you’re doing something more complex than subject line tests.

- Whether you can turn off send time optimization so you’re eliminating as many timing factors as possible—unless, of course, you’re testing timing.

- How the ESP packages up the “winner” variant with additional insights, or if it uses AI to speed up the A/B testing process.

- Integrations the platform has with other analytics tools, like Litmus Analytics, so you can combine your pre-send testing with your A/B testing to optimize your email marketing program even further.

Whether you use HubSpot, Constant Contact, Salesforce Marketing Cloud, or something else, check to see what kind of A/B testing features are available in your ESP. If you’re not happy with what your ESP has to offer, a tool like Optimizely, Hotjar, or Crazy Egg can help you. Though originally designed for landing pages, they have some features you can use—like a probability calculator—to run your A/B tests on email campaigns.

Steps before you launch your first email A/B test

Before you start your first A/B test, here’s a summary of everything you need to know about email A/B testing:

| Step | Why? | For example… |
|---|---|---|
| Choose an objective and make a hypothesis. | You should only test one element of your email at a time, and measure it against the appropriate metrics. | Subject lines / open rates |
| Randomize your audience and choose a large enough sample size. | You need to reach statistical significance (95% confidence, p < 0.05) to declare a “winner.” | 10,000 or more audience members, random slice |
| Run the test. | This will help you make adjustments to future campaigns. Be sure to choose a campaign you can replicate. | Variant A: CTA button color (default, what you always do); Variant B: CTA button color (new) |
| Analyze the results, document your findings, and roll out changes to a future campaign. | The value in A/B testing comes from choosing a winner and moving forward with those insights. | Example: Variant B (new CTA color) won. The next email has the new CTA color. |

Take the guesswork out of email testing with Litmus

All ESPs give you access to the same standard email metrics, and you can also tap into things like web analytics to understand behavior outside of the inbox. If you want to dig deeper into your A/B tests with advanced metrics, Litmus can help you do more pre-send testing, including email QA tests.

Here are just a few use cases where Litmus Email Analytics could improve your insights:

- Compare the read rates between variations, not just open rates, to see which subject line version attracted the most engaged subscribers.

- Understand which content leads to the highest share rate so you can leverage learnings to grow your referral program.

- Analyze A/B test results between email clients and devices, particularly if what you’re testing doesn’t have broad email client support.

Once you have your advanced analytics reports and findings, Litmus makes it easy to share the results with your team. Then, you can optimize and drive strategies in email and the rest of your marketing channel mix.

Kayla Voigt is a B2B Freelance Writer.